Practical drone tools for water management: Choosing the right system, sensors, and workflow

Part 2 of the series ‘Seeing the Stress from Above’

Drones are transforming water management in Mid-South cropping systems by detecting stress patterns before they are visible on the ground. This article walks agronomists and crop consultants through practical UAV platforms, sensors, and workflows that turn aerial imagery into actionable insights for irrigation, drainage, and crop health decisions. This is the second article in our “Seeing the Stress from Above” series.

Earn 1 CEU in Soil & Water Management by reading the article and taking the quiz.

Unmanned aerial vehicles (UAVs or drones) offer a practical way to monitor crop water status across entire fields and to identify emerging problems before stress reduces yield. Thermal, multispectral, and RGB sensors can detect water stress, quantify canopy vigor, and highlight areas affected by flooding, compaction, or variable soil conditions. Yet drones often appear complicated to agronomists and crop consultants because the technology is associated with robotics, imaging science, and data processing. This article aims to close that gap by translating UAV imaging for water management from a promising concept into a simple, field-ready workflow.

Selecting a UAV for water management in Mid-South production systems

Drones used in agriculture fall broadly into three categories: multirotor, fixed-wing, and hybrid airframes. They differ in price, endurance, payload options, ease of operation, and the type of fields and management questions they are best suited to (Chakhvashvili et al., 2024).

Multirotor drones (often quad- or hexacopters) are currently the most common platforms in crop production. Their rotary propellers allow vertical takeoff and landing, hovering, and slow, precise flight paths. This makes them effective in small or irregular fields and for targeted scouting within larger farms.

Because multirotors can fly slowly and close to the canopy, they collect very high-resolution RGB, multispectral, or thermal imagery over specific zones. Multirotor platforms cover less ground per battery than fixed wings but offer greater precision and the ability to hover over problem areas. Typical flight times range from about 20 to 45 minutes per battery, and they tend to be more sensitive to strong winds.

Common agricultural multirotors in the United States include the DJI Mavic 3 Multispectral for field-scale mapping and the Matrice 300 or 350 for heavier sensors and thermal imaging. Non-DJI alternatives such as the Parrot Anafi USA, the Autel Evo II Pro and the Evo II Dual 640T, and the Skydio 2 Plus are also used where operators prefer non-DJI hardware.

Fixed-wing drones resemble small airplanes with wings that generate lift during forward motion, reducing the energy required to stay airborne. As a result, they offer long endurance and large area coverage per flight. Fixed-wing systems can map hundreds of acres in a single mission and generally tolerate wind better than small multirotors.

The trade-offs are that they cannot hover, require more open space for launch and landing, and typically fly higher to cover large areas. As a result, their ground resolution is coarser than that of a multirotor but still sufficient for many water management applications.

Fixed wings are especially effective for whole-field water uniformity diagnostics, drought mapping, and images for same-day decisions. Platforms such as the senseFly eBee Ag can cover approximately 100 to 160 acres in under 20 minutes at 260 to 400 ft above ground level, making them valuable for applications where total area is more important than very fine spatial detail.

Hybrid drones combine the vertical takeoff and landing capability of multirotors with the efficient cruise of fixed wings. This design allows them to operate from small or uneven field edges while still achieving longer flight times and broader coverage than most multirotor platforms. Hybrids are particularly useful in large fields that are surrounded by trees, powerlines, or farm infrastructure where launching a traditional fixed wing is difficult and where operations need both broad coverage and the ability to slow down for closer inspection.

Common hybrid systems in the United States include the WingtraOne Gen II and the Quantum Systems Trinity F90 Plus. These platforms offer excellent endurance and mapping efficiency but at higher cost and with slightly more complex operation. Hybrids usually cannot carry the same wide range of sensors offered for large multirotor platforms.

In practice, the “right” platform depends on field size, field shape, and management goals. Multirotors excel in small or irregular fields, stand counts, and targeted scouting for compaction, drainage, or irrigation issues. Fixed wings are the preferred choice for large areas, rapid coverage, and the quick generation of results that can guide field decisions. Hybrids fill the gap for medium to large operations that need both multirotor convenience and fixed-wing efficiency.

Many operations ultimately use a combination of platforms, relying on multirotors for high-resolution troubleshooting and fixed wings or hybrids for routine field mapping. Table 1 summarizes the key operational characteristics of each UAV platform.

| Airframe type | Key strengths | Limitations | Best uses |
|---|---|---|---|
| Multirotor | High-resolution imaging at low altitude; ability to hover; simple launch and landing | Short flight time; limited coverage; wind sensitive | Small/irregular fields, stand counts, targeted scouting for compaction, drainage or irrigation issues |
| Fixed wing | Long endurance; large area coverage per flight; good wind handling | Needs open launch/landing area; coarser ground resolution; cannot hover | Large row-crop fields; rapid whole-field mapping; drought and flood stress surveys |
| Hybrid | Vertical takeoff with efficient fixed-wing cruise; good coverage from tight field edges | More complex and expensive; limited payload options | Medium to large fields; fields requiring both coverage and closer inspection |

Sensor options and what they reveal about water stress

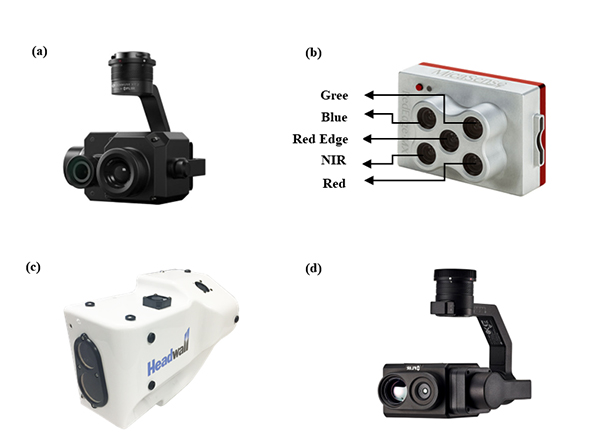

Choosing an appropriate sensor is just as important as selecting the airframe. Different sensors “see” different aspects of the crop–soil system and provide complementary information about water status, biomass, canopy structure, and stress.

RGB cameras produce color images similar to what the eye sees but at much higher spatial resolution than satellite imagery. They are the most affordable and widely available sensors on agricultural drones, and despite lacking the spectral detail of multispectral systems, they remain essential for day-to-day crop scouting (Niu et al., 2024; Sweet et al., 2022). Once processed into orthomosaics, high-resolution RGB imagery can reveal bare soil patches, compaction streaks, lodging, and stand gaps. Many of these features are closely related to zones where canopy cover is uneven, which may result from either excess or insufficient water.

Subtle changes in canopy structure, such as thinning, uneven height, or irregular row closure, often reflect differences in soil moisture or early stress. RGB imagery also plays an important role in multisensor workflows because it helps mask non-crop pixels in thermal and multispectral maps, improving canopy extraction and reducing noise.

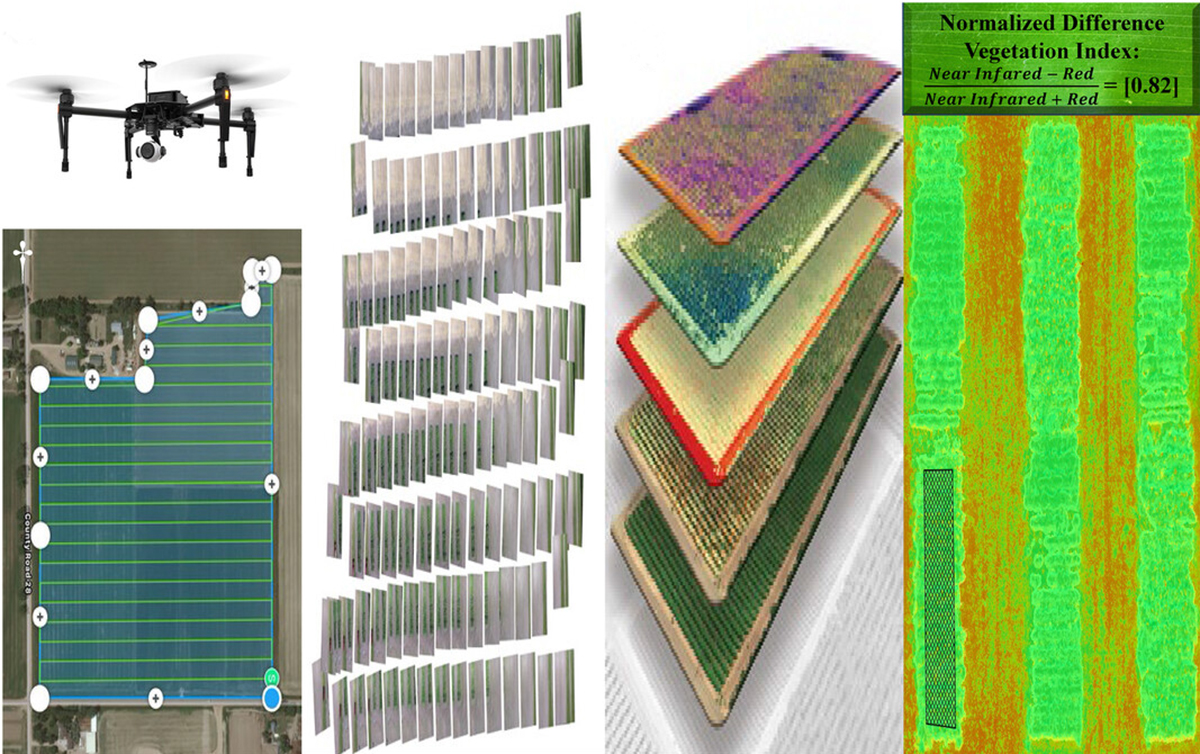

Multispectral cameras capture reflectance in discrete spectral bands, typically blue, green, red, red edge, and near-infrared (NIR), with the last two not visible to the human eye. Healthy vegetation absorbs red light and strongly reflects NIR, while stressed plants tend to reflect more red and less NIR. These patterns form the basis of vegetation indices such as the Normalized Difference Vegetation Index (NDVI), which ranges from −1 to +1 and is widely used to assess canopy density and vigor. Because NDVI integrates leaf area and chlorophyll content, it reflects overall plant health and can indicate when water availability is affecting growth before symptoms such as wilting or discoloration are visible.
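NDVI is computed per pixel as (NIR − Red) / (NIR + Red). The short Python sketch below shows that arithmetic on a few made-up reflectance values. The function names and sample numbers are illustrative assumptions, and returning 0.0 when both bands are zero is simply a convenience for this example.

```python
def ndvi(nir: float, red: float) -> float:
    """Return NDVI = (NIR - Red) / (NIR + Red) for one pixel.

    Inputs are reflectance values between 0 and 1. Returns 0.0 when both
    bands are zero to avoid dividing by zero (a choice made for this sketch).
    """
    total = nir + red
    if total == 0:
        return 0.0
    return (nir - red) / total


def ndvi_map(nir_band, red_band):
    """Apply ndvi() pixel by pixel to two equally sized 2D lists."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Hypothetical reflectance values: dense canopy, thinner canopy, mostly soil.
nir = [[0.50, 0.30, 0.25]]
red = [[0.05, 0.10, 0.20]]

print([[round(v, 2) for v in row] for row in ndvi_map(nir, red)])
# prints [[0.82, 0.5, 0.11]]
```

The dense-canopy pixel scores highest because it reflects far more NIR than red; as the two bands converge, the index falls toward zero.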

For water management, multispectral imagery helps track crop recovery after drought or flooding and identify zones with reduced biomass or early stress caused by shallow water tables, compaction, or persistent waterlogging (Danzi et al., 2022). Common multispectral sensors used in agricultural UAV platforms include the MicaSense RedEdge and Altum series, the DJI Mavic 3 Multispectral, and Sentera’s multispectral payloads, all of which provide reliable reflectance data suited for routine crop monitoring.

Thermal cameras measure long-wave infrared radiation emitted by plant and soil surfaces and convert it into temperature estimates. Well-watered plants stay cooler because transpiration cools the leaf surface, while water-stressed plants warm up as stomata close and transpiration slows. This makes thermal imaging a sensitive early indicator of drought stress, often detecting changes before wilting or color differences appear. Thermal maps are also useful for evaluating irrigation performance, since under-irrigated areas appear warmer and zones affected by drainage problems or standing water tend to appear cooler due to evaporative effects (Park et al., 2021). Thermal sensors range from compact radiometric sensors (e.g., FLIR-based modules) to integrated systems such as the DJI Zenmuse H20T/H30T.
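One simple way to read a thermal map is to compare each pixel with the field's typical canopy temperature. The sketch below labels pixels as warm, cool, or typical relative to the field median. The 2 °C margin and the sample temperatures are hypothetical values chosen for illustration, not thresholds from the literature, and would need tuning for crop, growth stage, and weather.

```python
from statistics import median


def label_thermal_zones(canopy_temps_c, margin_c=2.0):
    """Label each pixel relative to the field median canopy temperature.

    canopy_temps_c: 2D list of canopy temperatures in degrees Celsius.
    margin_c: hypothetical difference from the median that earns a flag.
    """
    field_median = median(t for row in canopy_temps_c for t in row)

    def label(temp_c):
        if temp_c - field_median > margin_c:
            return "warm"   # candidate under-irrigated or stressed area
        if field_median - temp_c > margin_c:
            return "cool"   # candidate wet or poorly drained area
        return "typical"

    return [[label(t) for t in row] for row in canopy_temps_c]


# Made-up canopy temperatures for a 3 x 3 block of pixels.
temps = [
    [28.1, 28.4, 31.2],
    [27.9, 28.0, 31.5],
    [26.0, 28.2, 28.3],
]

for row in label_thermal_zones(temps):
    print(row)
```

Using the median rather than a fixed temperature keeps the labels relative to conditions on the day of the flight, which matters because absolute canopy temperature shifts with air temperature and sunlight.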

Some of the most useful insights come from combining information from different sensors in a single workflow. Thermal imagery can highlight hot spots where plants are warming up under water stress. Multispectral indices such as NDVI can show whether those same areas also have reduced biomass or chlorophyll. RGB imagery adds context by revealing stand gaps, residue patterns, or physical damage that might explain part of the problem. When viewed together, these layers provide a clearer picture of what is happening in the crop and help separate true water stress from other causes of low vigor.

Practical drone tools for water management: From images to decisions

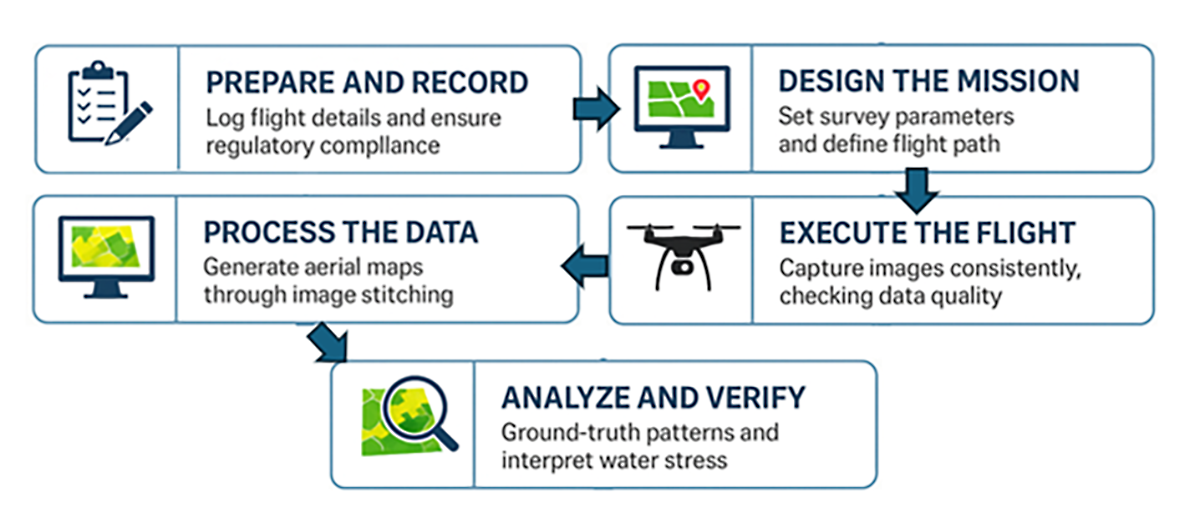

A simple, repeatable workflow (Figure 1) helps ensure that UAV imagery consistently turns into actionable advice rather than a collection of disconnected maps. Reliable decisions start with reliable data, and good UAV imagery depends on following a sequence of well-designed steps before, during, and after the flight.

Pre-flight compliance and documentation

Effective UAV use begins long before takeoff. Each flight should be framed by a clear operational record: the date, field name, crop stage, purpose of the mission, and expected outcomes. Maintaining a logbook allows agronomists to compare imagery across weeks or seasons and track how stress evolves in response to irrigation or rainfall.
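A logbook can be as simple as a spreadsheet, but the same record is easy to keep in code. The sketch below is one possible structure, assuming the five items named above; the class name, field names, and sample entries are made up for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass
class FlightRecord:
    """One logbook entry; the fields mirror the items listed in the text."""
    flight_date: date
    field_name: str
    crop_stage: str
    purpose: str
    expected_outcome: str

    def to_json(self) -> str:
        """Serialize the record, writing the date in ISO format."""
        record = asdict(self)
        record["flight_date"] = self.flight_date.isoformat()
        return json.dumps(record)


def field_history(logbook, field_name):
    """Return one field's records in date order for week-to-week comparison."""
    matches = [r for r in logbook if r.field_name == field_name]
    return sorted(matches, key=lambda r: r.flight_date)


# Made-up entries for illustration only.
logbook = [
    FlightRecord(date(2025, 7, 21), "East Pivot", "R3",
                 "Recheck warm zone", "Thermal map after irrigation fix"),
    FlightRecord(date(2025, 7, 14), "East Pivot", "R2",
                 "Irrigation uniformity", "Thermal map of under-irrigated zones"),
    FlightRecord(date(2025, 7, 15), "Creek Bottom", "V6",
                 "Waterlogging check", "NDVI map of drowned-out areas"),
]

for record in field_history(logbook, "East Pivot"):
    print(record.to_json())
```

Sorting by date within a field is what makes it possible to line up imagery from successive flights and see how a stressed zone responds to irrigation or rainfall.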

Regulatory compliance is equally important. Operators must ensure that all flights meet FAA Part 107 requirements, including maintaining visual line of sight, staying at or below 400 ft above ground level, avoiding restricted airspace, and keeping current pilot certification. Documenting these elements establishes consistency, ensures safety, and provides a transparent history of decisions made using drone data.

Mission planning and sensor selection

Good maps begin with thoughtful planning, and that starts with defining the agronomic question, whether the goal is to identify where irrigation is falling short, assess drought recovery, look for waterlogging after heavy rain, or diagnose areas of chronically low vigor. Once the objective is clear, the operator can select the appropriate airframe and sensor, such as a multirotor with thermal capability for detailed irrigation troubleshooting or a fixed wing with multispectral imaging for whole-field surveys.

Mission-planning software makes it easy to outline field boundaries, set altitude and image overlap, and schedule consistent automated grids. Flights between about 200 and 400 ft generally balance detail and coverage, and overlaps of roughly 80% forward and 70% side to side help ensure clean mosaics.
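The trade-off between altitude, detail, and overlap can be estimated with basic camera geometry. The sketch below computes ground sampling distance (the ground width of one pixel) and the spacing between photos or flight lines for a given overlap. The camera values are hypothetical and should be replaced with the specifications of the actual sensor; the code also assumes a nadir-pointing camera over flat ground, with the sensor's short side aligned with the flight direction.

```python
FT_TO_M = 0.3048


def ground_sampling_distance_cm(altitude_ft, sensor_width_mm,
                                focal_length_mm, image_width_px):
    """Ground width of one pixel, in centimeters, for a nadir image."""
    altitude_m = altitude_ft * FT_TO_M
    return altitude_m * sensor_width_mm / (focal_length_mm * image_width_px) * 100


def spacing_m(altitude_ft, sensor_dim_mm, focal_length_mm, overlap):
    """Distance between photo centers (or flight lines) for a given overlap.

    sensor_dim_mm is the sensor dimension along the direction of interest;
    overlap is a fraction such as 0.80 for 80%.
    """
    footprint_m = altitude_ft * FT_TO_M * sensor_dim_mm / focal_length_mm
    return footprint_m * (1 - overlap)


# Hypothetical camera: 13.2 x 8.8 mm sensor, 8.8 mm lens, 5472 px wide image.
SENSOR_W_MM, SENSOR_H_MM, FOCAL_MM, IMAGE_W_PX = 13.2, 8.8, 8.8, 5472

for altitude_ft in (200, 400):
    gsd = ground_sampling_distance_cm(altitude_ft, SENSOR_W_MM, FOCAL_MM, IMAGE_W_PX)
    forward = spacing_m(altitude_ft, SENSOR_H_MM, FOCAL_MM, 0.80)  # along track
    side = spacing_m(altitude_ft, SENSOR_W_MM, FOCAL_MM, 0.70)     # between lines
    print(f"{altitude_ft} ft: GSD {gsd:.2f} cm/px, "
          f"photo every {forward:.1f} m, lines {side:.1f} m apart")
```

Doubling the altitude doubles both the pixel size and the spacing between flight lines, which is why higher flights cover more ground per battery at the cost of finer detail.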

Timing matters as much as design. Thermal imagery is most reliable near midday, when canopy and air temperatures differ the most, while multispectral and RGB imagery benefit from stable, even illumination during mid-morning or early afternoon. Flights taken immediately after irrigation can hide true stress because wet soil cools the canopy, so many agronomists prefer to image the day before irrigation or several hours after watering has ended.

Collect consistent imagery

Once the mission is planned, the focus shifts to flying with consistency and control. Automated grid or double grid patterns help the drone cover the field uniformly and reduce operator error. During flight, the operator should watch wind speed, battery levels, and live image previews to confirm that exposure and sharpness remain stable. Most mid-flight issues can be prevented with basic pre-flight checks, such as inspecting batteries, propellers, and gimbals; formatting memory cards; updating firmware; and calibrating sensors when required. Multispectral cameras should capture a calibration panel at the start of the mission, and for multisensor flights, verifying that all cameras are recording avoids gaps in the dataset. A short early-season test flight is often enough to catch problems with focus, shutter speed, or motion blur before large-scale campaigns begin.
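The calibration panel matters because it ties raw pixel values to a known reflectance. The sketch below shows the idea in its simplest form, a one-point scaling that assumes the sensor responds linearly with no offset. That assumption, the panel reflectance of 0.50, and the sample pixel values are all illustrative; commercial processing software applies a fuller radiometric correction than this.

```python
from statistics import mean


def panel_scale_factor(panel_pixel_values, panel_reflectance):
    """Scale that maps raw values to reflectance, from one panel image.

    panel_pixel_values: raw values sampled from the panel area of the image.
    panel_reflectance: known panel reflectance for this band (0 to 1).
    """
    return panel_reflectance / mean(panel_pixel_values)


def to_reflectance(raw_values, scale):
    """Convert raw pixel values from the same band and flight to reflectance."""
    return [value * scale for value in raw_values]


# Made-up raw values for one band; the panel is assumed to reflect 50%.
panel_pixels = [20000, 20400, 19600]
scale = panel_scale_factor(panel_pixels, panel_reflectance=0.50)

crop_pixels = [4000, 16000]
print([round(r, 3) for r in to_reflectance(crop_pixels, scale)])
```

Because the scale is derived from the light conditions at the moment the panel was photographed, it only holds while illumination stays stable, which is one reason consistent lighting during the flight matters.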

Stable lighting, low wind, and dry conditions help produce clean data. Multispectral and RGB systems generally tolerate variable humidity better than thermal sensors, which are more sensitive to atmospheric interference.

Convert images into maps

Once the flight is complete, the raw images must be converted into usable map layers. Photogrammetry software stitches overlapping photos into a single orthomosaic, a corrected top-down image that preserves accurate scale and geometry across the field. Tools such as Pix4Dfields, DJI SmartFarm, and Sentera FieldAgent streamline this process and can generate index layers within minutes for moderate-sized fields, making it possible to review results directly at the field edge.

When greater control is needed, Pix4Dmapper or Agisoft Metashape provide advanced settings for fine-tuning reconstruction. Thermal imagery requires additional steps such as specifying emissivity and applying basic atmospheric corrections. Aligning thermal maps with RGB imagery helps isolate the canopy and reduces noise in canopy-temperature estimates.
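To show why emissivity matters, the sketch below applies a simplified broadband correction based on the Stefan-Boltzmann relationship: the camera's apparent temperature is treated as a mix of radiation emitted by the canopy and background radiation reflected from it. This ignores atmospheric effects and the sensor's actual spectral response, so it is only an approximation of what processing software does. The default emissivity of 0.98 and the reflected background temperature are assumed values for illustration.

```python
KELVIN_OFFSET = 273.15


def emissivity_corrected_temp_c(apparent_temp_c, emissivity=0.98,
                                reflected_temp_c=-20.0):
    """Estimate surface temperature from the camera's apparent temperature.

    Assumes measured radiation = emissivity * emitted + (1 - emissivity) *
    reflected, with radiation proportional to absolute temperature to the
    fourth power. Both default values are assumptions for this sketch.
    """
    t_apparent = apparent_temp_c + KELVIN_OFFSET
    t_reflected = reflected_temp_c + KELVIN_OFFSET
    emitted = (t_apparent ** 4 - (1 - emissivity) * t_reflected ** 4) / emissivity
    return emitted ** 0.25 - KELVIN_OFFSET


# An apparent canopy reading of 30 C under a cold clear-sky background.
print(round(emissivity_corrected_temp_c(30.0), 2))

# With emissivity of 1.0 there is nothing to correct.
print(round(emissivity_corrected_temp_c(30.0, emissivity=1.0), 2))
```

For a high-emissivity surface such as a closed canopy the adjustment is small, but it grows as emissivity drops, which is one reason mixed soil and canopy pixels add noise to canopy-temperature estimates.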

Open-source tools like OpenDroneMap and MicMac offer no-cost alternatives for producing orthomosaics and surface models, and QGIS can be used to visualize maps and compute simple indices.

Spatial interpretation and field validation

Maps are most valuable when interpreted through agronomic context. Normalized Difference Vegetation Index (NDVI) and other indices highlight differences in vigor but cannot alone distinguish drought from nutrient deficiency, pest damage, or poor emergence. Thermal maps point to hot spots but may also reflect sandy soils, shallow rooting, or recent tillage. For this reason, drone imagery should be treated as a guide for targeted scouting rather than a stand-alone diagnosis. The most reliable approach is to examine the spatial patterns in the imagery, identify representative areas, and then visit those locations in the field using GPS-linked maps. Comparing canopy appearance, soil moisture, residue distribution, and pest or nutrient symptoms with the patterns seen from the drone helps confirm or refine the interpretation.


Layer combinations strengthen the diagnosis: thermal plus NDVI helps differentiate heat-driven stress from low biomass, while NDVI overlaid with soil or elevation maps helps link vigor patterns to soil variation or drainage conditions.
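The logic of combining thermal and NDVI layers can be written as a small set of rules that turn each zone into a scouting prompt. The sketch below is one hypothetical version. The thresholds (2 °C from the field median, NDVI below 0.5) and the wording of each note are assumptions for illustration, and the output is meant to direct a field visit, not to replace it.

```python
def scouting_note(temp_anomaly_c, ndvi, margin_c=2.0, low_ndvi=0.5):
    """Suggest what to check in a zone from its thermal and NDVI values.

    temp_anomaly_c: zone canopy temperature minus the field median (deg C).
    ndvi: mean NDVI of the zone.
    margin_c, low_ndvi: hypothetical thresholds; adjust per crop and stage.
    """
    warm = temp_anomaly_c > margin_c
    cool = temp_anomaly_c < -margin_c
    low_vigor = ndvi < low_ndvi

    if warm and not low_vigor:
        return "warm canopy, normal vigor: check for early water stress"
    if warm and low_vigor:
        return "warm and low vigor: check stand, soil, and longer-term stress"
    if cool and low_vigor:
        return "cool and low vigor: check for standing water or poor drainage"
    if low_vigor:
        return "low vigor, typical temperature: check nutrients, pests, emergence"
    return "no flag"


# Made-up zone summaries: (temperature anomaly in deg C, mean NDVI).
zones = {"A": (3.1, 0.78), "B": (2.6, 0.35), "C": (-2.4, 0.30), "D": (0.2, 0.81)}

for name, (anomaly, zone_ndvi) in zones.items():
    print(name, "->", scouting_note(anomaly, zone_ndvi))
```

A warm zone that still has normal vigor is the case thermal imagery is best placed to catch, because the canopy has begun to heat up before biomass has declined.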

By following a consistent workflow like this, Mid-South agronomists can move from simply “trying out a drone” to integrating UAV imaging as a routine and dependable part of their irrigation and drainage management strategy. The result is not just more data, but clearer decisions made with greater confidence and fewer assumptions.

Drone imaging has moved from a research novelty to a practical, field-ready tool for managing water in cropping systems. When the right platform and sensors are matched to field size and management goals, and when flights are planned with proper altitude, overlap, timing, and weather conditions, UAV data can reveal water stress patterns long before they are visible from the ground. Thermal imagery collected near midday and multispectral or RGB imagery collected under stable lighting provide reliable maps of drought stress, irrigation performance, and canopy vigor.

When these layers are combined with soil, elevation, and field knowledge—and verified through targeted ground-truthing—agronomists can separate true water stress from nutrient, pest, or soil-related problems. User-friendly processing tools make these workflows accessible, allowing drones to extend, rather than replace, agronomic expertise. Used consistently, UAV imagery supports clearer, more confident decisions and enables responsive, data-driven water management across the Mid-South.

Chakhvashvili, E., Machwitz, M., Antala, M., Rozenstein, O., Prikaziuk, E., Schlerf, M., … & Rascher, U. (2024). Crop stress detection from UAVs: Best practices and lessons learned for exploiting sensor synergies. Precision Agriculture, 25, 2614–2642. https://doi.org/10.1007/s11119-024-10168-3

Danzi, D., De Paola, D., Petrozza, A., Summerer, S., Cellini, F., Pignone, D., & Janni, M. (2022). The use of near-infrared imaging (NIR) as a fast non-destructive screening tool to identify drought-tolerant wheat genotypes. Agriculture, 12(4), 537. https://doi.org/10.3390/agriculture12040537

Niu, S., Nie, Z., Li, G., & Zhu, W. (2024). Early drought detection in maize using UAV images and YOLOv8+. Drones, 8(5), 170. https://doi.org/10.3390/drones8050170

Park, S., Ryu, D., Fuentes, S., Chung, H., O’Connell, M., & Kim, J. (2021). Dependence of CWSI-based plant water stress estimation with diurnal acquisition times in a nectarine orchard. Remote Sensing, 13(14), 2775. https://doi.org/10.3390/rs13142775

Sweet, D. D., Tirado, S. B., Springer, N. M., Hirsch, C. N., & Hirsch, C. D. (2022). Opportunities and challenges in phenotyping row crops using drone-based RGB imaging. The Plant Phenome Journal, 5(1), e20044. https://doi.org/10.1002/ppj2.20044

Self-study CEU quiz

Earn 1 CEU in Soil & Water Management by taking the quiz for the article. For your convenience, the quiz is printed below. The CEU can be purchased individually, or you can access it as part of your Online Classroom Subscription.

1. Which type of drone is most commonly used in crop production?

a. Fixed-wing.

b. Multirotor.

c. Hybrid.

d. Satellite drone.

2. What type of sensor produces images similar to what the human eye sees?

a. RGB camera.

b. Thermal.

c. Multispectral.

d. Infrared-only.

3. What does NDVI primarily measure?

a. Soil moisture.

b. Crop height.

c. Air temperature.

d. Vegetation health and vigor.

4. What is a key advantage of multirotor drones?

a. Long flight endurance.

b. Covering hundreds of acres quickly.

c. Low cost of sensors.

d. Ability to hover and capture high-resolution images.

5. What is a limitation of fixed-wing drones?

a. Cannot fly in wind.

b. Poor battery life.

c. Cannot hover.

d. Expensive sensors only.

6. Hybrid drones combine features of which two types?

a. Satellite and multirotor.

b. Fixed-wing and satellite.

c. Multirotor and fixed-wing.

d. Thermal and RGB drones.

7. Healthy vegetation typically reflects more of which type of light?

a. Red.

b. Blue.

c. Near-infrared (NIR).

d. Ultraviolet.

8. What does thermal imaging detect in crops?

a. Temperature differences indicating water stress in plants.

b. Variations in leaf color and pigment levels across the canopy.

c. Differences in soil nutrient availability and composition patterns.

d. Changes in plant species distribution across the field.

9. When are thermal images most reliable?

a. Early morning.

b. Midday.

c. Midnight.

d. During rainfall.

10. What is an orthomosaic?

a. A 3D model of soil layers.

b. A stitched, corrected top-down image of a field.

c. A thermal-only image.

d. A drone flight path.

Text © The authors. CC BY-NC-ND 4.0. Except where otherwise noted, images are subject to copyright. Any reuse without express permission from the copyright owner is prohibited.